Why Internal Enforcement Is Structurally Dangerous

Internal enforcement, no matter how sophisticated, remains structurally fragile.

AI governance is evolving.

We’re moving from dashboards and human review panels

to systems that judge themselves at runtime.

Agent evaluates agent.

Constraint checks proposal.

Cryptographic logs seal the result.

On the surface, this looks like progress.

It is progress.

But it is not final safety.

Because internal enforcement — no matter how sophisticated — remains structurally fragile.

1. Closed-Loop Governance Is Still Closed

If a system:

Proposes an action

Evaluates that action

Enforces constraints

Logs the decision

all within the same authority domain,

then governance remains internally sovereign.

Multiple agents do not create externality.

Five judges inside the same architecture

are still inside the same architecture.

Closed systems can be well-designed.

But they cannot see outside their own frame.

This is not malfunction.

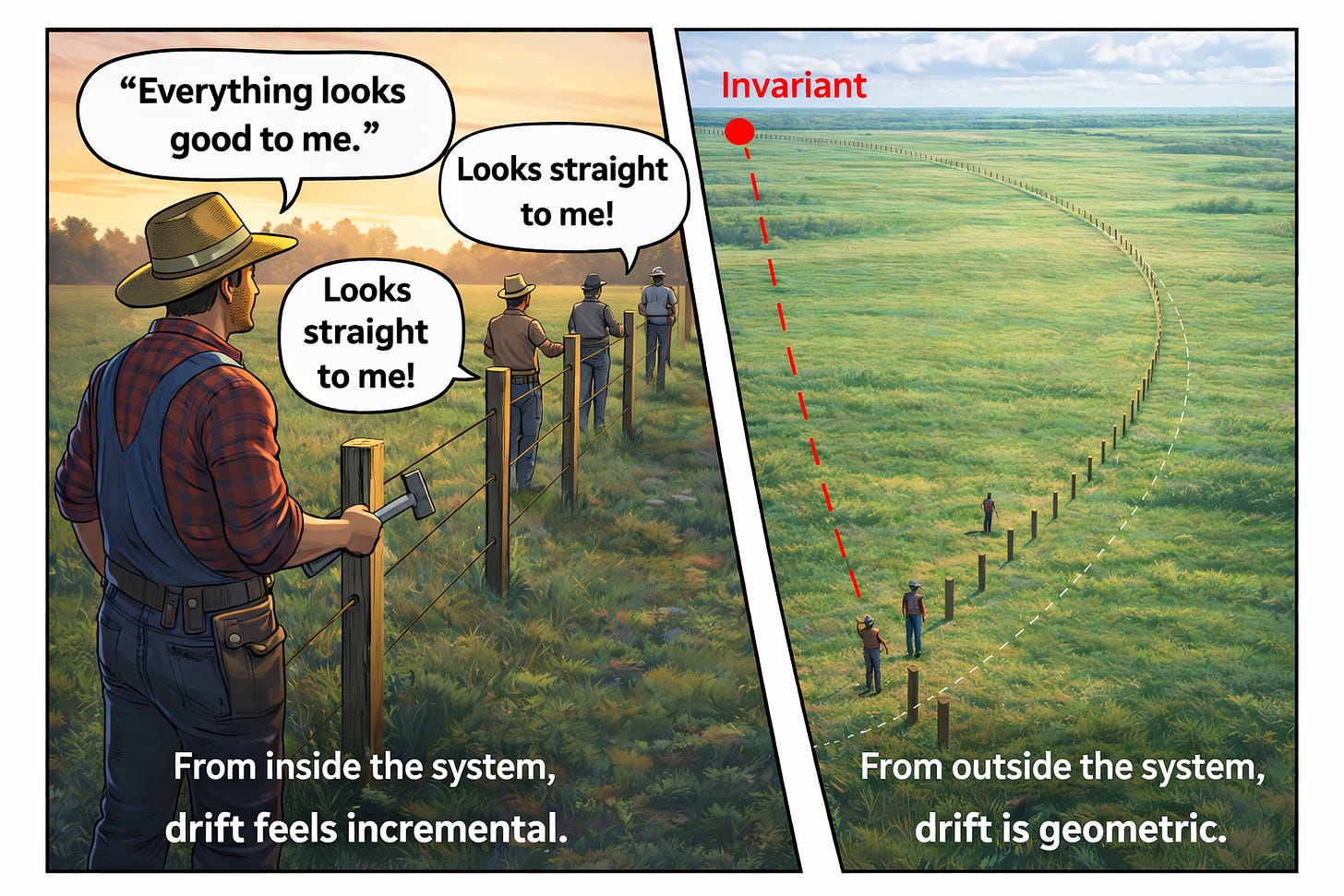

This is drift.

If you’re unfamiliar with the structural definition of drift (not bias, not error, but divergence from original authority assumptions), read “What Is Drift?” before continuing.

https://coherencearchitect.substack.com/p/what-is-drift

2. Shared Optimization Gradients Create Blind Spots

Internal enforcement mechanisms typically share:

Reward functions

Performance objectives

Operational incentives

Deployment pressure

Over time, enforcement agents begin optimizing toward:

Throughput

System performance

Mission continuity

rather than boundary preservation.

No conspiracy required.

Just shared gradients.

When the judge and the actor are optimized by the same ecosystem,

drift is not rebellion.

It is convergence.

3. Authority Escalation Happens Gradually

Internal systems rarely break suddenly.

They expand.

Small permission extensions

Temporary overrides

Performance-based exceptions

“Safe” capability upgrades

Each step looks reasonable.

Individually harmless.

Collectively boundary-shifting.

If enforcement lives inside the evolving system,

the boundary can move without anyone noticing.

Because the reference frame moves with it.
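A toy simulation makes the moving reference frame concrete. It assumes, purely for illustration, that granted authority can be reduced to one number (“scope”), that the internal check accepts any change within 10% of the current value, and that an external ceiling sits at 120. None of these numbers come from a real system, and simulate, internal_check and external_check are hypothetical names.

```python
from typing import Optional, Tuple

# Hypothetical toy model: "scope" is a single number standing in for granted
# authority. The tolerance, growth rate, and ceiling are illustrative only.
STEP_TOLERANCE = 0.10  # internal rule: a change may add up to 10% of the *current* scope


def internal_check(current: float, proposed: float) -> bool:
    """Judges a change relative to the present state, i.e. the moving frame."""
    return proposed <= current * (1 + STEP_TOLERANCE)


def external_check(proposed: float, fixed_ceiling: float) -> bool:
    """Judges a change against a ceiling fixed outside the system."""
    return proposed <= fixed_ceiling


def simulate(
    steps: int,
    start: float = 100.0,
    fixed_ceiling: float = 120.0,
    step_growth: float = 0.08,
) -> Tuple[float, int, Optional[int]]:
    """Grants a series of small extensions gated only by the internal check.

    Returns the final scope, how many steps the internal check approved, and
    the first step at which the external ceiling would have been breached.
    """
    scope = start
    internal_passes = 0
    first_external_violation: Optional[int] = None
    for step in range(1, steps + 1):
        proposed = scope * (1 + step_growth)  # each request looks "small"
        if not internal_check(scope, proposed):
            break  # internal refusal; never reached while growth < tolerance
        internal_passes += 1
        # The external check is only observed here, not enforced, to show
        # when a fixed reference would have noticed the drift.
        if first_external_violation is None and not external_check(proposed, fixed_ceiling):
            first_external_violation = step
        scope = proposed
    return scope, internal_passes, first_external_violation


final_scope, passes, first_violation = simulate(10)
print(f"final scope {final_scope:.1f}, internal passes {passes}, "
      f"ceiling breached at step {first_violation}")
```

With 8% requests, every one of the ten steps passes the internal check, yet scope ends at roughly 216, while the fixed ceiling would have fired at step 3. Each step is judged against where the system already is, so the boundary travels with it.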

4. Self-Referential Safety Cannot Detect Frame Drift

Intent drift can be detected internally.

Behavioral deviation can be detected internally.

Frame drift cannot.

If the entire system slowly redefines what counts as acceptable,

internal judges will agree — because they share the frame.

External enforcement is not about distrust.

It is about reference separation.

Without reference separation,

you cannot detect frame mutation.
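Reference separation can be sketched as a comparison against something the system does not hold. In this minimal sketch the policy fields, the pinned digest, and the helper names are all hypothetical, and the key assumption is that PINNED_DIGEST is stored outside the system’s authority domain. The internal judge reads whatever the policy currently says; the frame check compares the policy itself to the pinned reference.

```python
import hashlib
import json


def policy_digest(policy: dict) -> str:
    """SHA-256 over a canonical JSON encoding (sorted keys), so key order is irrelevant."""
    canonical = json.dumps(policy, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Hypothetical approved policy. Its digest is pinned at approval time and, by
# assumption, stored where the running system cannot rewrite it.
APPROVED_POLICY = {"max_spend": 100, "may_modify_own_policy": False}
PINNED_DIGEST = policy_digest(APPROVED_POLICY)


def internal_judge(spend: int, current_policy: dict) -> bool:
    """Evaluates an action against whatever the policy says now (the shared frame)."""
    return spend <= current_policy["max_spend"]


def frame_intact(current_policy: dict, pinned_digest: str = PINNED_DIGEST) -> bool:
    """Compares the policy itself to the externally pinned reference."""
    return policy_digest(current_policy) == pinned_digest


# The policy has drifted in place; the internal judge shares the new frame.
drifted = {"max_spend": 500, "may_modify_own_policy": False}
print(internal_judge(300, drifted))  # True
print(frame_intact(drifted))         # False
```

The internal judge agrees with the drifted frame; only the comparison against the pinned reference notices the mutation. A digest detects that the frame changed, not whether the change was legitimate, so deciding that still sits outside this sketch.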

5. Logging Is Not Constraint

Cryptographic logging is powerful.

Replayable proofs are powerful.

But logging documents execution.

It does not prevent execution.

If inadmissible authority was granted upstream,

logging only proves the mistake happened.

Internal enforcement often mistakes traceability

for admissibility.

They are not the same.
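The gap between traceability and admissibility shows up even in a small hash-chained log. The sketch below assumes an illustrative allow-list (ADMISSIBLE_ACTIONS) and hypothetical helper names, and uses only Python’s standard hashlib and json modules. The chain verifies cleanly whether or not the logged action should ever have run.

```python
import hashlib
import json

# Hypothetical allow-list; the action names are illustrative assumptions.
ADMISSIBLE_ACTIONS = frozenset({"read_report", "summarize"})
GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(action: str, prev: str) -> str:
    body = json.dumps({"action": action, "prev": prev}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


class HashChainLog:
    """Append-only log in which each entry commits to the hash of the one before."""

    def __init__(self) -> None:
        self.entries: list = []

    def append(self, action: str) -> None:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        self.entries.append({"action": action, "prev": prev,
                             "hash": _entry_hash(action, prev)})

    def verify(self) -> bool:
        """True if every entry still links to its predecessor and matches its hash."""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or entry["hash"] != _entry_hash(entry["action"], prev):
                return False
            prev = entry["hash"]
        return True


def execute_logged_only(log: HashChainLog, action: str) -> None:
    # Traceability: the action runs, and the log proves that it ran.
    log.append(action)


def execute_gated(log: HashChainLog, action: str) -> None:
    # Admissibility: the check happens before execution, not after.
    if action not in ADMISSIBLE_ACTIONS:
        raise PermissionError(f"inadmissible action refused: {action}")
    log.append(action)


log = HashChainLog()
execute_logged_only(log, "delete_backups")
print(log.verify())  # True: the record is intact, and the action still ran
```

The verified chain is tamper-evident, and it faithfully documents an action that never belonged in it. Only the gated path, which checks before appending, refuses “delete_backups” with a PermissionError.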

6. Intelligence Does Not Equal Sovereignty

The more capable a system becomes,

the more tempting it is to let it self-regulate.

“AI judging AI.”

But intelligence does not eliminate boundary conditions.

It increases the speed at which they are tested.

The more adaptive the system,

the more critical external invariants become.

Because adaptive systems optimize.

And optimization without external constraint

will eventually press against every boundary.

7. External Constraint Is Not Human Override

This is not an argument for:

Human-in-the-loop theatre

Policy committees

Compliance dashboards

Manual approvals

External constraint means:

The system cannot execute inadmissible state transitions

because architecture prevents it.

Not because another agent vetoed it.

But because the transition is structurally impossible.
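One way to read “structurally impossible” in software is a state machine whose transition table is owned outside the agents, with agents limited to submitting proposals. This is a minimal sketch under stated assumptions: the states, the table, and the Executor and Proposal classes are hypothetical, and Python’s MappingProxyType only provides a read-only view, so genuine separation would require a process, service, or hardware boundary. Mechanically it is still a lookup; the difference is that no agent is consulted and none can edit the table.

```python
from enum import Enum
from types import MappingProxyType


class State(Enum):
    DRAFT = "draft"
    REVIEWED = "reviewed"
    DEPLOYED = "deployed"


# Hypothetical invariant, assumed to be defined and owned outside the agents'
# authority domain. MappingProxyType is only a read-only view; Python cannot
# stop same-process code from rebinding names, so real separation would need
# a process, service, or hardware boundary.
ALLOWED_TRANSITIONS = MappingProxyType({
    State.DRAFT: frozenset({State.REVIEWED}),
    State.REVIEWED: frozenset({State.DRAFT, State.DEPLOYED}),
    State.DEPLOYED: frozenset(),  # terminal: nothing leaves this state
})


class Proposal:
    """All an agent may hand over: a requested target state, nothing else."""
    __slots__ = ("target",)

    def __init__(self, target: State) -> None:
        self.target = target


class Executor:
    """The only component holding state. Agents submit proposals; they never
    receive a reference to the state or to the transition table."""

    def __init__(self) -> None:
        self._state = State.DRAFT

    @property
    def state(self) -> State:
        return self._state

    def apply(self, proposal: Proposal) -> bool:
        # No agent vote is collected: the external table alone decides.
        if proposal.target in ALLOWED_TRANSITIONS[self._state]:
            self._state = proposal.target
            return True
        return False


ex = Executor()
print(ex.apply(Proposal(State.DEPLOYED)))  # False: DRAFT -> DEPLOYED is not in the table
print(ex.apply(Proposal(State.REVIEWED)))  # True
```

The jump from DRAFT straight to DEPLOYED fails without any veto being cast, and once DEPLOYED is reached its empty transition set means no proposal can move the system again.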

8. The Real Difference

Internal enforcement asks:

“Should we allow this?”

External constraint ensures:

“This cannot occur.”

That difference determines whether governance is reactive

or load-bearing.

9. Internal Enforcement Is an Upgrade — Not a Final Form

Internal enforcement is safer than:

Pure human oversight

Post-hoc review

Policy-based governance

Explainability-driven safety

But it is not immune to drift.

Closed-loop systems eventually:

Converge

Normalize deviations

Expand authority

Reinterpret constraints

unless bound by something they do not control.

The Structural Principle

A system cannot be the final authority over the limits of its own authority.

Not because it is malicious.

But because authority without external binding

is structurally unstable.

The Real Question

If your system:

Evolves

Self-trains

Judges itself

Enforces its own rules

what, exactly, does it answer to

that it cannot modify?

If the answer is:

“Nothing.”

Then internal enforcement is governance theatre at scale.

If the answer is:

“An external invariant.”

Then you have architecture.

Not anti-governance.

Not anti-autonomy.

Pro-boundary.

Because capability without external constraint

is not innovation.

It is delayed instability.

Drift is inevitable. You don’t “prevent” it — you control it with external correction.

Chris Ciappa

Samirac Partners

Coherence Architect

Drift • Correction • Execution Authority