Why Outcome Engineering Violates the Definition of a Protected Class

Equality in automated systems is about rules, not ratios

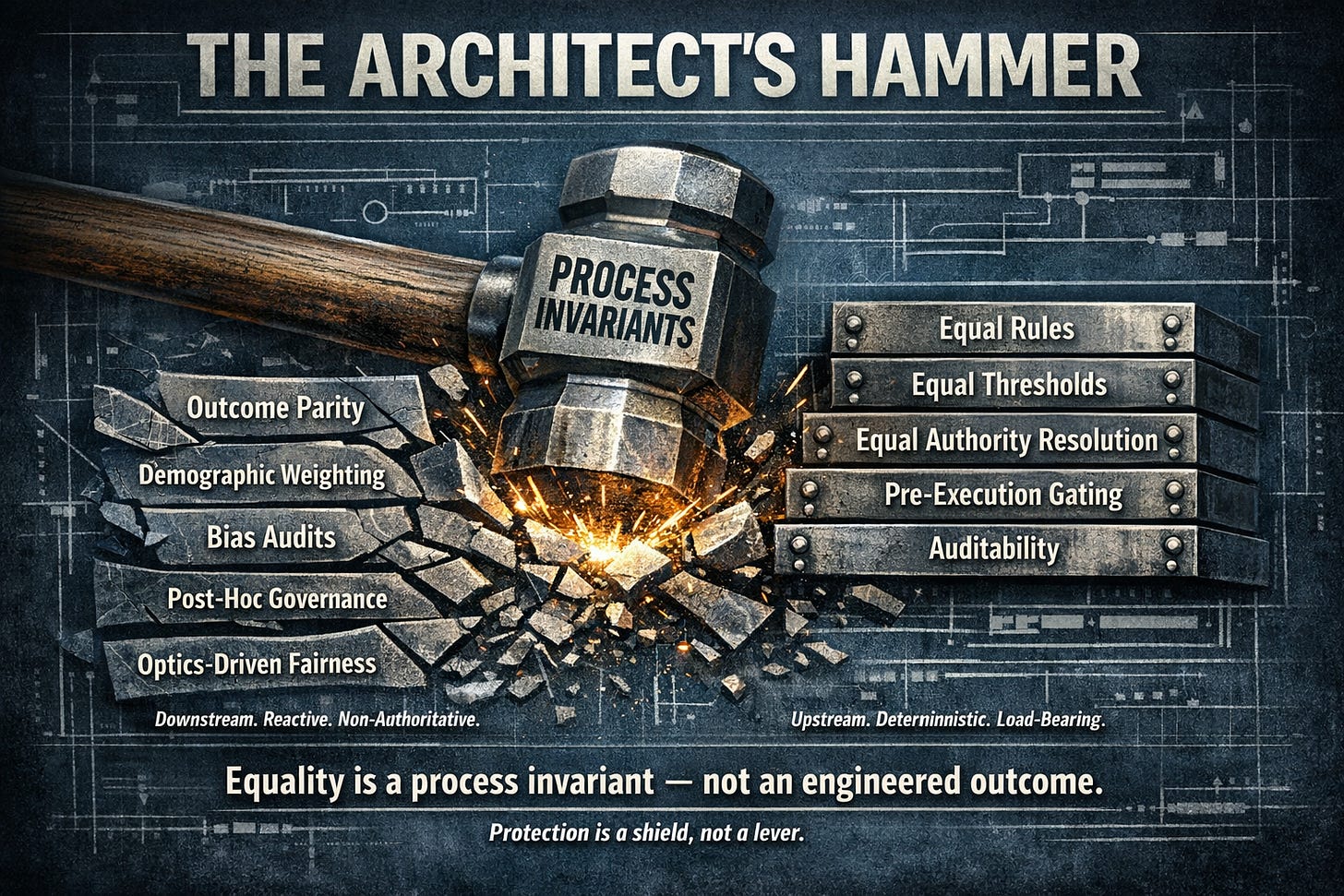

The Architect’s Hammer illustrates a simple systems truth: equality either exists in the rules before execution, or it does not exist at all.

What a “protected class” actually means (legally)

In law, a protected class is:

A category of people, defined by a characteristic such as race, sex, religion, or age, that may not be subjected to discrimination on that basis.

That protection imposes a constraint on how rules are applied — before execution.

👉 not a requirement to engineer outcomes.

A protected class means no different rules; it does not mean engineered outcomes.

If a system changes thresholds, weights, or authority by group to force parity,

it is explicitly discriminating by design — regardless of intent.

What equality actually requires

Equality requires load-bearing invariants:

🔹 no different rules

🔹 no different thresholds

🔹 no different treatment

🔹 no different authority resolution

These are architectural constraints, not policy preferences.
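
One way to make these invariants structural rather than aspirational is to keep the classification out of the decision function's input type altogether. The sketch below is a minimal illustration, not a reference design: the `Applicant` and `DecisionInput` types, the `group` field, and the threshold of 0.7 are all hypothetical names and values chosen for the example. It only addresses explicit use of the classification.

```python
from dataclasses import dataclass

THRESHOLD = 0.7  # hypothetical single threshold, shared by every input


@dataclass(frozen=True)
class Applicant:
    """Full record as stored; includes the classification (hypothetical)."""
    score: float
    group: str


@dataclass(frozen=True)
class DecisionInput:
    """The only fields the rule may see; there is no classification field."""
    score: float


def to_decision_input(applicant: Applicant) -> DecisionInput:
    # The classification is dropped here, before any rule executes.
    return DecisionInput(score=applicant.score)


def decide(x: DecisionInput) -> bool:
    # One rule, one threshold, one authority path for all inputs.
    return x.score >= THRESHOLD


a = Applicant(score=0.75, group="A")
b = Applicant(score=0.75, group="B")
print(decide(to_decision_input(a)), decide(to_decision_input(b)))  # True True
```

Because `decide` never receives the classification, it cannot apply a different rule, threshold, or authority path by group; the constraint is enforced by the function signature rather than by a policy document.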

Where systems fail

If you attempt post-hoc governance or encode group-based weighting —

the kind of downstream corrections shown breaking under the hammer — you:

🔹 hard-code discrimination

🔹 hide authority decisions

🔹 destroy auditability

🔹 make the system unstable under drift

👉 Equality in automated systems is guaranteed by equal rules and equal constraints — not post-hoc governance.

👉 Discrimination is determined by the process, not whether outcomes match demographic proportions.

The invariant

Protection is a constraint against discrimination, not a mandate for advantage.

It is a shield, not a lever.

If it cannot survive contact with equal rules and equal authority,

it is not protection — it is compensation.

One invariant, one test

Invariant:

The same rules and authority path apply to all inputs.

Test:

If changing the classification changes the outcome while all other inputs remain constant,

the system is discriminating by design.

Intent does not matter.

Outcomes do not rescue it.

The mechanism has already failed.
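
The test above can be run mechanically: hold every input constant, change only the classification, and compare outcomes. The following is a minimal sketch under stated assumptions. The `Case` type, the two example rules, and their thresholds are hypothetical and exist only to show one rule that passes the test and one that fails it.

```python
from dataclasses import dataclass, replace
from typing import Callable, Iterable, Sequence


@dataclass(frozen=True)
class Case:
    score: float
    group: str  # the classification under test (hypothetical field)


def uniform_rule(case: Case) -> bool:
    # Same threshold for every input; the classification is never read.
    return case.score >= 0.7


def group_adjusted_rule(case: Case) -> bool:
    # The threshold depends on the classification.
    threshold = 0.6 if case.group == "B" else 0.7
    return case.score >= threshold


def passes_flip_test(
    rule: Callable[[Case], bool],
    cases: Iterable[Case],
    groups: Sequence[str],
) -> bool:
    """True if no outcome changes when only the classification changes."""
    for case in cases:
        baseline = rule(case)
        for g in groups:
            if rule(replace(case, group=g)) != baseline:
                return False
    return True


cases = [Case(0.55, "A"), Case(0.65, "A"), Case(0.75, "B")]
groups = ["A", "B"]
print(passes_flip_test(uniform_rule, cases, groups))         # True
print(passes_flip_test(group_adjusted_rule, cases, groups))  # False
```

The second rule fails on the case with score 0.65: it is denied as group "A" and approved as group "B" with nothing else changed. A test like this only covers the cases it is given, so it is evidence about the rule, not proof.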

Where systems break the definition

If a system attempts to enforce demographic outcome weighting — whether through:

🔹 post-hoc governance

🔹 threshold adjustment

🔹 class-based compensation

—it must:

🔹 encode group identity into decision logic

🔹 apply different rules or weights by classification

🔹 alter authority resolution by class

At that point, the system is explicitly discriminating by design.

Intent does not change the mechanism.

The Bias Audit Fallacy

Bias audits that measure outcome parity without inspecting authority resolution

are auditing optics, not systems.

They assume:

🔹 unequal outcomes imply discrimination

🔹 equal outcomes imply fairness

Both assumptions are false.
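
A small worked example shows how outcome rates and rule structure can point in opposite directions. The data below is invented for illustration and says nothing about any real population; the rule names and thresholds are likewise hypothetical.

```python
from collections import defaultdict

# Toy data invented for illustration: (score, group) pairs.
CASES = [
    (0.80, "A"), (0.90, "A"), (0.60, "A"), (0.75, "A"),
    (0.65, "B"), (0.72, "B"), (0.50, "B"), (0.68, "B"),
]


def uniform_rule(score: float, group: str) -> bool:
    # One threshold for everyone; the group argument is ignored.
    return score >= 0.7


def group_adjusted_rule(score: float, group: str) -> bool:
    # A lower threshold for group "B", chosen to equalize approval rates.
    threshold = 0.6 if group == "B" else 0.7
    return score >= threshold


def approval_rates(rule) -> dict:
    """Approval rate per group: what an outcome-parity audit measures."""
    approved, total = defaultdict(int), defaultdict(int)
    for score, group in CASES:
        total[group] += 1
        approved[group] += int(rule(score, group))
    return {g: approved[g] / total[g] for g in total}


def reads_group(rule) -> bool:
    """True if swapping only the group label changes any outcome."""
    return any(rule(s, "A") != rule(s, "B") for s, _ in CASES)


for rule in (uniform_rule, group_adjusted_rule):
    print(rule.__name__, approval_rates(rule), reads_group(rule))
# uniform_rule {'A': 0.75, 'B': 0.25} False
# group_adjusted_rule {'A': 0.75, 'B': 0.75} True
```

In this toy data, an audit that looks only at approval rates would flag the uniform rule and pass the group-adjusted one, while the label-swap check reports the opposite. The rates alone do not reveal which rule reads the classification.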

What bias audits miss

Most bias audits never ask:

🔹 Who had authority to act?

🔹 What rules governed execution?

🔹 Were thresholds invariant?

🔹 Could the system fail closed?

🔹 Was authority deterministic or probabilistic?

Instead, they operate after execution, where:

🔹 causality is already lost

🔹 authority is already exercised

🔹 drift is already baked in

The architectural reality

A model may predict probabilistically.

Authority cannot be probabilistic.

If class identity influences:

🔹 permission

🔹 thresholds

🔹 escalation

🔹 execution rights

then discrimination is structural, not statistical.
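
The split between probabilistic prediction and deterministic authority can be sketched as a gate that sits between a model's score and the action. This is a minimal illustration under assumptions: the `authorize` function, the `Decision` type, and the threshold value are hypothetical, and the gate deliberately accepts no classification argument.

```python
from dataclasses import dataclass
from typing import Optional

APPROVE_THRESHOLD = 0.7  # hypothetical, fixed before execution


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str  # recorded so the authority path can be audited


def authorize(score: Optional[float]) -> Decision:
    """Deterministic gate over a probabilistic score.

    The model may be uncertain; the gate is not. The same score always
    yields the same decision, and invalid input is denied (fail closed).
    """
    if score is None:
        return Decision(False, "missing score: fail closed")
    if not (0.0 <= score <= 1.0):  # this comparison also rejects NaN
        return Decision(False, "score out of range: fail closed")
    if score >= APPROVE_THRESHOLD:
        return Decision(True, f"score {score:.2f} >= {APPROVE_THRESHOLD}")
    return Decision(False, f"score {score:.2f} < {APPROVE_THRESHOLD}")


print(authorize(0.82).allowed)          # True
print(authorize(None).allowed)          # False
print(authorize(float("nan")).allowed)  # False
```

The contrast is with a gate that samples, for example approving with probability equal to the score: there the same input can produce different decisions on different runs, so the exercise of authority cannot be replayed or audited.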

The system consequences (not a moral claim)

Group-based weighting:

🔹 hard-codes unequal treatment

🔹 obscures where authority is exercised

🔹 destroys causal traceability

🔹 makes decisions unauditable

🔹 introduces instability under drift

These are mechanical failures, not philosophical ones.

The correct definition of equality in automated systems

Equality is guaranteed by equal rules and equal constraints —

not by post-hoc outcome governance.

And therefore:

Discrimination is determined by the process and authority structure,

not by whether outcomes match demographic proportions.

Final strike

If people don’t understand this, it’s usually because they’ve been taught to reason about fairness at the outcome layer, where optics live — the layer that shatters first under load — instead of at the process and authority layer, where law, safety, and accountability actually reside.

If equality does not exist in the rules before execution,

no amount of outcome management can make it real after the fact.

RELATED READING

AI Governance Fails Because It Starts One Layer Too Late

(A deeper layer on inadmissible states, not just well-defined ones.)

EDUCATIONAL TRACK

https://www.samirac.com/educational-track

(A guided entry path into the Drift corpus. It is a curated sequence built to help readers move from early recognition, into diagnosis, then mechanism, and finally into institutional application. Begin with the articles that establish the visible pattern, then move into the pieces that name drift, reveal its structure, explain why some people see it earlier than others, and show how it manifests inside real domains.)

—

Chris Ciappa

Founder & Chief Architect, Samirac Partners LLC

Drift Stack™ · SAQ™ · dAIsy™ · Mind-Mesch™