Where Drift Stack™ Applies — And Where It Doesn’t

Separating learning systems from authority-bearing systems before drift becomes damage

Most debates about AI safety, governance, and drift are stuck for one simple reason:

We keep arguing about AI as if it were one thing. It isn’t.

We collapse learning systems, perception systems, decision systems, and execution systems into a single bucket — and then wonder why conversations about drift, governance, and safety talk past each other.

The disagreement isn’t philosophical.

It’s architectural.

Some AI systems earn legitimacy by learning from reality.

Others must earn legitimacy before they are allowed to act at all.

Once you separate those two classes, most of the confusion disappears.

Two Fundamentally Different Classes of AI Systems

1. Reality-Trained / Learning Systems

These are the systems most people mean when they talk about “AI.”

They improve through exposure.

They learn by accumulation.

They expect error — and correct after interaction.

Correction path:

Reality → data → training → model → behavior → error → more reality

This is the world Yann LeCun and others describe, and within this domain they are right.

Examples include:

Perception systems (vision, speech, sensing)

Robotics and physical AI

Recommendation engines

Forecasting and modeling

Content generation

Simulation and experimentation tools

In these systems, failure is part of learning — and usually bounded.

Mistakes cost time, accuracy, or efficiency. They do not usually create irreversible harm.

These systems earn legitimacy through sustained exposure to reality.

And that’s fine.

Where Drift Stack™ and SAQ™ Are Not Critical

This matters, so I’ll be explicit.

Not every AI system needs heavy governance, admissibility checks, or execution-time constraint enforcement.

Drift Stack™ and SAQ™ are generally not required for:

Exploratory research tools

Creative generation

Non-authoritative copilots

Analytics and insight platforms

Advisory systems with no execution authority

Why?

No irreversible state change

No delegated authority

No autonomous execution

A human or external system remains the final arbiter

Errors here are tolerable.

They inform improvement rather than cause damage.

Applying authority-grade governance to these systems would slow innovation without increasing safety.

That distinction matters.

Where Drift Stack™ Is Advisable — But Not Mandatory

There is a middle ground.

Some systems influence decisions without finalizing them.

Examples include:

Internal decision support

Enterprise analytics

Planning and optimization tools

“Human-in-the-loop” automation

Here, drift creates risk, not catastrophe.

In these systems, Drift Stack™ is not about hard enforcement — it’s about clarity:

Surfacing hidden assumptions

Mapping authority boundaries

Identifying constraint conflicts

Detecting early coherence loss

Drift Stack™ acts as an upstream stabilizer, not a gatekeeper.

Where Drift Stack™ and SAQ™ Are Non-Negotiable

This is the core of the work.

Some systems must be governed before execution, not audited after the fact.

These include systems that:

Move or settle money

Grant or revoke access

Authenticate identity

Issue healthcare orders or eligibility decisions

Enforce legal or compliance actions

Control infrastructure (cloud, grid, network)

Deploy autonomous agents with write or execute permissions

In these systems:

Actions are irreversible

Errors propagate instantly

Post-hoc correction is legally and ethically insufficient

“Learning from failure” causes real-world harm

Here, legitimacy cannot come from learning.

It must come from admissibility.

In authority systems, the question is not:

Did the model learn?

The question is:

Was this action allowed to exist at all?

Authority-bearing systems (Drift Stack™ / SAQ™):

Intent → authority → constraints → admissibility → execution → record

Correction happens before consequence. Expanded into individual checks, the path looks like this:

Proposed action

→ identity resolution

→ authority validation

→ boundary & constraint evaluation

→ admissibility check

→ execution (if and only if allowed)

→ immutable record

→ external correction (if needed)
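To make the sequence concrete, here is a minimal sketch of a pre-execution gate in Python. It is an illustration under stated assumptions, not the Drift Stack™ or SAQ™ implementation: the names `ProposedAction`, `Gate`, and `submit`, and the example constraint, are all hypothetical. An append-only list stands in for the immutable record, and external correction is left out.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set, Tuple


@dataclass(frozen=True)
class ProposedAction:
    # Hypothetical shape of a proposed action; not taken from the article.
    actor: str
    kind: str
    amount: float


@dataclass
class Gate:
    known_actors: Set[str]                                # identity resolution
    grants: Dict[str, Set[str]]                           # actor -> permitted action kinds
    constraints: List[Callable[[ProposedAction], bool]]   # boundary and constraint checks
    # Append-only list standing in for an immutable record (assumption).
    record: List[Tuple[str, str, str]] = field(default_factory=list)

    def submit(self, action: ProposedAction,
               execute: Callable[[ProposedAction], None]) -> bool:
        """Run every check first; call execute only if all of them pass."""
        if action.actor not in self.known_actors:
            return self._deny(action, "unknown identity")
        if action.kind not in self.grants.get(action.actor, set()):
            return self._deny(action, "no authority")
        if not all(check(action) for check in self.constraints):
            return self._deny(action, "constraint violated")
        execute(action)  # reached if and only if the action is admissible
        self.record.append((action.actor, action.kind, "executed"))
        return True

    def _deny(self, action: ProposedAction, reason: str) -> bool:
        # Denials are recorded too, so refused actions leave a trace.
        self.record.append((action.actor, action.kind, "denied: " + reason))
        return False


# Usage: a transfer limit of 1000 is an invented example constraint.
executed: List[ProposedAction] = []
gate = Gate(
    known_actors={"payments-agent"},
    grants={"payments-agent": {"transfer"}},
    constraints=[lambda a: a.amount <= 1000],
)
ok = gate.submit(ProposedAction("payments-agent", "transfer", 250.0), executed.append)
blocked = gate.submit(ProposedAction("payments-agent", "transfer", 5000.0), executed.append)
```

The design point is the ordering: the side effect sits behind every check, so an inadmissible action never runs and there is nothing to undo afterward.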

Learning Systems vs Authority Systems: The Actual Divide

This is the distinction most AI debates miss.

Learning systems earn trust through exposure

Authority systems earn trust through constraint

Learning tolerates drift.

Authority cannot.

That’s not a moral claim.

It’s a structural one.

Drift is acceptable in learning systems.

Drift is catastrophic in authority systems.

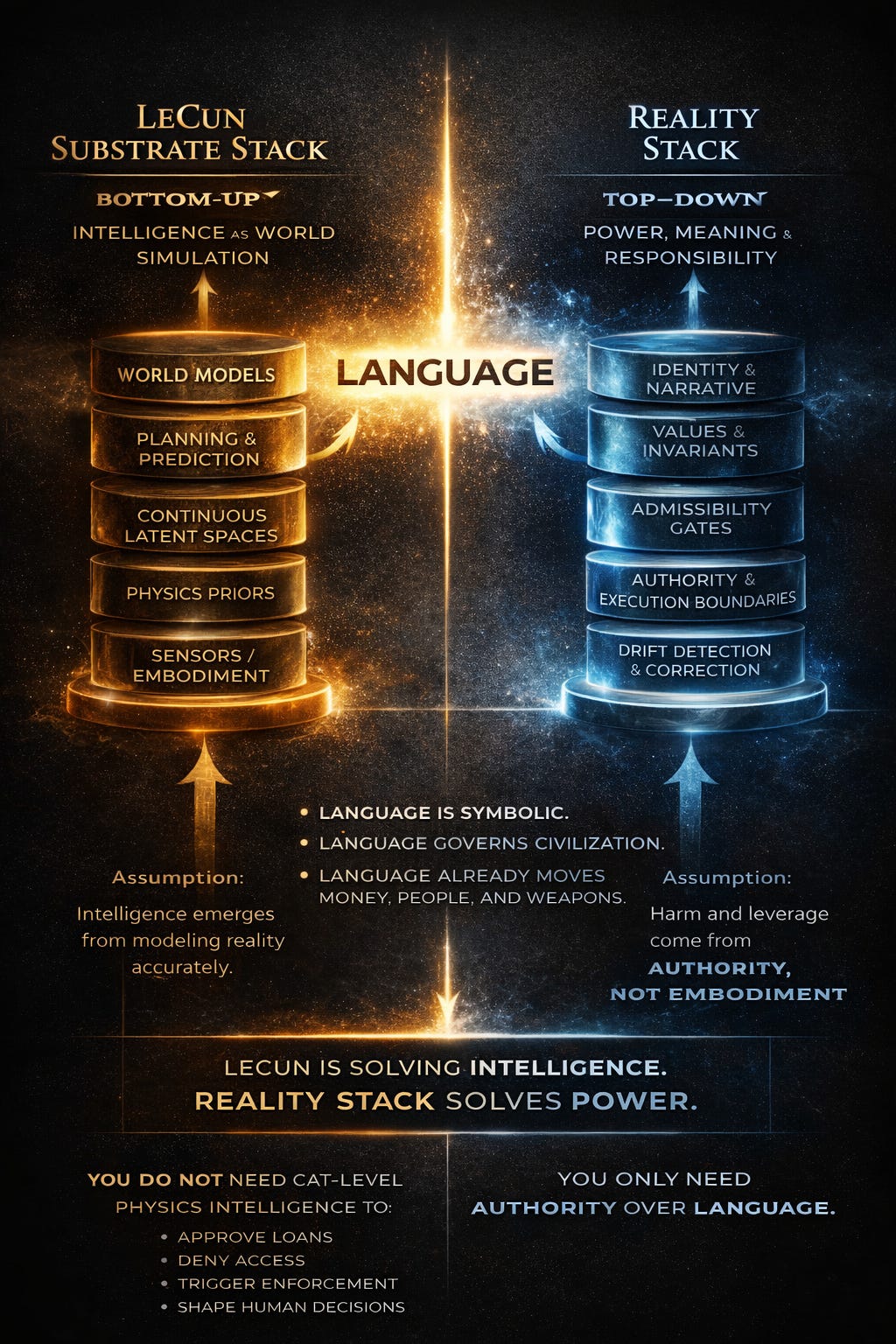
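The three tiers described earlier (not critical, advisable, non-negotiable) can be sketched as a simple triage function. This is one possible reading, not a formal rule from the framework: the field names, the `Tier` enum, and the choice to treat any single authority property as enough for the top tier are my assumptions for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    NOT_CRITICAL = "not critical"
    ADVISABLE = "advisable"
    NON_NEGOTIABLE = "non-negotiable"


@dataclass(frozen=True)
class SystemProfile:
    # Hypothetical descriptors drawn from the criteria listed in the text.
    irreversible_state_change: bool
    delegated_authority: bool
    autonomous_execution: bool
    influences_decisions: bool


def governance_tier(profile: SystemProfile) -> Tier:
    # Assumption: any one authority property is enough for the top tier.
    if (profile.irreversible_state_change
            or profile.delegated_authority
            or profile.autonomous_execution):
        return Tier.NON_NEGOTIABLE
    # Influences decisions, but a human or external system finalizes them.
    if profile.influences_decisions:
        return Tier.ADVISABLE
    return Tier.NOT_CRITICAL


# Usage with three invented profiles.
creative_tool = SystemProfile(False, False, False, False)
decision_support = SystemProfile(False, False, False, True)
payments_agent = SystemProfile(True, True, True, True)
tiers = [governance_tier(p) for p in (creative_tool, decision_support, payments_agent)]
```

A real assessment would weigh more than four booleans, but the ordering of the checks mirrors the argument: authority properties are tested first, influence second.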

Why Language Matters

Modern systems do not require physical embodiment to exercise power. Authority today is mediated through language: contracts, permissions, approvals, denials, classifications, and policy expressions.

Drift Stack™ is not concerned with how well a system models the physical world. It is concerned with how symbolic outputs propagate authority, trigger irreversible actions, and alter real-world state.

Side-by-Side (the money shot)

Reality-trained systems (LeCun):

Reality → data → training → model → behavior → error → more reality

Correction happens after interaction.

This is why many AI governance programs feel performative:

They observe outcomes instead of constraining execution.

They audit behavior after authority has already been granted.

That isn’t governance.

It’s documentation.

Authority-bearing systems (Drift Stack™ / SAQ™):

Intent → authority → constraints → admissibility → execution → record

Correction happens before consequence.

Why Governance Fails When It Starts Too Late

For a deeper exploration of why governance must constrain authority before execution — and why observing outcomes after the fact isn’t governance — see my earlier piece:

“AI Governance Fails Because It Starts One Layer Too Late”

👉 https://coherencearchitect.substack.com/p/ai-governance-fails-because-it-starts

Precision Beats Universality

Drift Stack™ is not a universal AI framework.

It is an authority framework.

It applies exactly where systems cross from possibility into consequence: where correct behavior cannot safely be learned by breaking reality.

The future of AI will require both:

Systems that learn from reality

And systems that are not allowed to learn by harming it

Confusing those two domains is why the conversation keeps failing.

Separating them is how we move forward.

Correction after the fact isn’t safety.

It’s damage control.

AI hallucinations. Governance failures. Strategy drift.

Different symptoms — same architectural failure.

Over the past year, I’ve mapped a repeatable failure pattern across AI systems, institutions, markets, and organizations, formalized as the Drift Stack™.

The diagnostic identifies which layer is failing — and why coherence is being lost.

If you are deploying AI systems that can take action (deny, trigger, flag, enforce, decide), the diagnostic call described below determines whether that authority is safe to delegate.

Drift Architecture Diagnostic — $250

A focused 30-minute architectural review to determine whether the issue sits in:

Identity

Frame

Boundary

Drift

External Correction

If there’s a deeper structural issue, it becomes visible quickly.

If not, you leave with clarity.

👉 Drift Assessment Info: https://www.samirac.com/drift-assessment

👉 Full work index: https://www.samirac.com/start-reading

—

Chris Ciappa

Founder & Chief Architect, Samirac Partners LLC

Drift Stack™ · SAQ™ · dAIsy™ · Mind-Mesch™